Introduction

Artificial intelligence (AI) is advancing quickly and is helping companies succeed in areas well beyond basic everyday operations. While data analysis has supported industry for decades, the current generation of AI moves beyond descriptive reporting to offer complex predictive, prescriptive, and quasi-autonomous strategic recommendations. This introduces governance challenges that earlier business intelligence (BI) tools did not pose. AI helps businesses work more efficiently, make fewer mistakes, and plan for the future more effectively (IBM, n.d.). If used carefully, AI can greatly enhance the capabilities of boards and executive teams, leading to improved results for companies (Agnese, Arduino, & Di Prisco, 2025). To take full advantage of AI, companies must address important issues such as algorithmic bias, operational transparency, and ethical conduct (Anwar, 2025). AI is now becoming a tool for making important corporate decisions, and as the technology grows more capable, it plays a larger role in how companies are led. The use of automated systems for decision making is broadly known as algorithmic governance (Ustahaliloğlu, 2025). AI governance, by contrast, refers specifically to the system of rules, policies, processes, and organizational structures that a firm implements to ensure its AI systems are used ethically, transparently, securely, and in alignment with its fiduciary duties and legal requirements. Algorithmic governance is quickly changing many fields, from government services to the criminal justice system. Although AI technology is becoming more advanced day by day, there are still not enough rules or guidelines on how companies should include it in their decision-making and management processes (LegalFly, 2025). This gap creates high risk because companies are adopting new technologies like AI without clear ethical or legal direction (Mittelstadt, 2019).
Without strong governance, there could be unexpected problems like biases in AI algorithms, legal troubles, and a loss of trust from clients and partners.

AI’s Potential Contributions to Board-Level Decision Making

Enhanced Risk Assessment and Mitigation

AI excels at examining large amounts of data, such as market trends, financial documents, and social media activity. Using AI, corporate governance processes such as risk assessment, compliance monitoring, and financial reporting can be automated. This automation not only enhances efficiency but also reduces the probability of human error (Djalovic, 2024). AI can also surface risks that human reviewers might not notice, such as subtle anomalies buried in large volumes of transactions.
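As a minimal illustration of how automated monitoring can surface items a human reviewer might overlook, the sketch below flags transaction amounts that sit unusually far from the rest. It uses a robust "modified z-score" (based on the median and median absolute deviation) as a simple stand-in for the far richer models a real system would use. The function name `flag_anomalies`, the sample payments, and the 3.5 cut-off are illustrative assumptions, not part of any particular product.

```python
from statistics import median

def flag_anomalies(amounts, threshold=3.5):
    """Return indices of amounts whose modified z-score exceeds threshold.

    Median and median absolute deviation (MAD) are used instead of mean and
    standard deviation because they are less distorted by the very outliers
    we are trying to find. The threshold is an illustrative assumption.
    """
    med = median(amounts)
    mad = median(abs(x - med) for x in amounts)
    if mad == 0:
        # Most values are identical: flag anything that differs from the median.
        return [i for i, x in enumerate(amounts) if x != med]
    return [
        i for i, x in enumerate(amounts)
        if abs(0.6745 * (x - med) / mad) > threshold
    ]

# Hypothetical daily payment amounts; one entry is far out of line.
payments = [1020, 980, 1005, 995, 1010, 990, 15000, 1000]
print(flag_anomalies(payments))  # prints [6]
```

A flagged index is a prompt for human review, not a conclusion. The value of automation here lies in narrowing thousands of items down to the few worth a director's or auditor's attention.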

Strategic Forecasting and Scenario Planning

AI has the ability to create detailed scenarios and provide insights driven by data to assist in making strategic decisions. It works by analyzing past data and identifying patterns, enabling leaders to predict future trends in the market. This capability allows for the evaluation of various strategic options and the development of contingency plans.

For example, AI can simulate the impact of different pricing strategies on a company’s market share. It can also forecast how technological changes may alter industry dynamics (Makridakis, Spiliotis, & Assimakopoulos, 2018). Take, for instance, an energy company that uses AI to project future energy needs by factoring in climate change and advancements in technology. This allows the company to make informed decisions about where to invest in renewable energy sources.
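To make the pricing example concrete, the sketch below compares several candidate prices against fixed rival prices using a multinomial logit share model. This is a common textbook demand model, substituted here purely for illustration. The price sensitivity, prices, market size, and function names are hypothetical assumptions. A production forecasting system would estimate such parameters from historical data instead of fixing them by hand.

```python
import math

def logit_share(own_price, rival_prices, price_sensitivity=0.05):
    """Our market share under a simple multinomial logit model.

    Each option's attractiveness is exp(-price_sensitivity * price);
    our share is our attractiveness divided by the total.
    """
    weights = [math.exp(-price_sensitivity * p) for p in [own_price, *rival_prices]]
    return weights[0] / sum(weights)

def compare_pricing(candidate_prices, rival_prices, market_size, price_sensitivity=0.05):
    """Return (price, share, revenue) for each candidate pricing strategy."""
    results = []
    for price in candidate_prices:
        share = logit_share(price, rival_prices, price_sensitivity)
        results.append((price, share, share * market_size * price))
    return results

# Hypothetical scenario: two rivals priced at 100 and 110, 10,000 buyers.
for price, share, revenue in compare_pricing([90, 100, 110], [100, 110], 10_000):
    print(f"price={price}: share={share:.1%}, revenue={revenue:,.0f}")
```

The model shows the trade-off a board would weigh: a lower price wins share, and a higher price earns more per unit. The scenario table makes both visible side by side.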

Objective Performance Evaluation

AI can help make executive performance evaluations fairer by relying on objective metrics and reducing the impact of subjective biases. When people handle executive evaluations, their judgments often get tangled up in biases such as the halo effect, recency bias, and all the usual suspects. Evaluators lean on gut feelings and subjective measures, which skews decisions about pay and promotion (Pulakos, Mueller-Hanson, & Arad, 2019). AI cuts through that noise by rooting its evaluations in hard data and objective metrics. It examines important measures such as a company’s financial performance, market share, and customer satisfaction to give a detailed view of executive performance (Tambe, Cappelli, & Yakubovich, 2019).

Augmenting Human Cognition and Decision Making

AI excels at handling and analyzing large amounts of data, far beyond human capacity. This allows AI to find patterns and trends that people might not see (Brynjolfsson & McAfee, 2014). In business management, AI can help by analyzing market trends, regulatory changes, and public opinion about a company. This gives company leaders a better understanding of their business environment, so they can make smarter, more far-sighted decisions. Table 1 presents AI’s potential contributions to corporate decision making.

Exploring the Ethical and Legal Landscape

Addressing Algorithmic Bias and Fairness

When AI algorithms learn from biased historical data, they can perpetuate or even amplify existing societal biases (Crawford & Schultz, 2014). For example, if AI is used to assess how executives perform, it could unfairly judge people from underrepresented groups if the training data were shaped by past biases in promotions and salaries. To address this issue, boards should require rigorous methods for testing and validating the fairness of AI systems. This includes checking how algorithms affect different groups and using fairness-aware machine learning techniques.
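One simple way to check "how algorithms impact different groups" is to compare favorable-outcome rates across groups. The sketch below computes each group's selection rate and its ratio to the best-treated group. It then marks ratios below 0.8 for review, following the "four-fifths" rule of thumb used in US employment-selection guidance. The group labels, ratings data, and function names are hypothetical. This is only one of many fairness metrics, and it does not by itself establish that a model is fair.

```python
from collections import defaultdict

def selection_rates(outcomes):
    """Favorable-outcome rate per group.

    outcomes: iterable of (group_label, is_favorable) pairs.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, is_favorable in outcomes:
        totals[group] += 1
        favorable[group] += int(is_favorable)
    return {g: favorable[g] / totals[g] for g in totals}

def disparate_impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    return {g: (r / best if best > 0 else 1.0) for g, r in rates.items()}

# Hypothetical AI ratings of executives: True = rated "high performer".
ratings = ([("group_a", True)] * 30 + [("group_a", False)] * 20
           + [("group_b", True)] * 12 + [("group_b", False)] * 28)

for group, ratio in disparate_impact_ratios(ratings).items():
    status = "review" if ratio < 0.8 else "ok"  # four-fifths rule of thumb
    print(group, round(ratio, 2), status)
```

Here group_b is rated favorably at half the rate of group_a, so the check would send the model back for investigation before its ratings inform pay or promotion.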

Legal Ramifications and Liability

Decision making using AI has complex and evolving legal implications (Citron, 2008). It is hard to determine who is responsible when an AI system makes mistakes or causes harm. For example, if an AI risk-assessment tool makes wrong predictions and someone loses money, who is responsible? Is it the developers who created it, the company using it, or the board that relies on it? We need new laws to answer these questions. One legal response is to create AI operating rules (AIOR) that specify who is responsible when AI systems are used to make decisions.

Transparency and Explainability

For AI to gain trust and acceptance in high-stakes decision-making settings such as board meetings, its decision-making process must be transparent and understandable (Miller, 2019). Many AI systems function as a ‘black box,’ providing answers without explaining why, which leads to mistrust and ethical concerns. Company leaders must insist on Explainable AI (XAI) systems that offer clear reasons for their outputs (Anwar, 2025). This is essential for the board to ensure output reliability by validating the model’s internal logic rather than simply accepting a generated answer. Techniques such as SHapley Additive exPlanations (SHAP) values or Local Interpretable Model-agnostic Explanations (LIME) can be used to clarify how AI arrived at certain predictions. Furthermore, when AI is used for crucial decisions, it should provide a simple report that explains the steps taken to reach those decisions.
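SHAP is built on Shapley values from cooperative game theory. The sketch below computes exact Shapley values for a single prediction. It uses one common formulation in which a feature that is "absent" from a coalition is replaced by a baseline value. This illustrates the quantity SHAP approximates and is not the `shap` library's API. The risk model, feature names, input, and baseline are all hypothetical. Because the cost grows as 2^n in the number of features, exact computation is practical only for a handful of features, which is why real tools approximate.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley attributions of model(x) relative to model(baseline)."""
    n = len(x)

    def value(coalition):
        # Features in the coalition take their actual value; others use the baseline.
        point = [x[j] if j in coalition else baseline[j] for j in range(n)]
        return model(point)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis

def risk_model(features):
    # Hypothetical risk score with an interaction between leverage and volatility.
    leverage, volatility, liquidity = features
    return 2.0 * leverage + 1.5 * volatility - 1.0 * liquidity + 0.5 * leverage * volatility

names = ["leverage", "volatility", "liquidity"]
x = [3.0, 2.0, 1.0]
baseline = [1.0, 1.0, 1.0]
phis = shapley_values(risk_model, x, baseline)

# Rank drivers by the size of their contribution.
for name, phi in sorted(zip(names, phis), key=lambda t: abs(t[1]), reverse=True)[:3]:
    print(f"{name}: {phi:+.2f}")
```

The attributions always sum to `risk_model(x) - risk_model(baseline)`. This additivity is what lets a director read them as a breakdown of "why this score is higher than normal." A large attribution on a feature that should be irrelevant is the kind of warning sign the board should ask about.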

Redefining Board Competency and Governance

Despite the widespread adoption and promotion of AI, many board members still don’t have a strong grasp of digital or AI concepts, and this is creating a real gap in governance. This lack of AI understanding slows down trust and makes it tough for boards to oversee AI projects with any real confidence (Chong, Zhang, Goucher-Lambert, Kotovsky, & Cagan, 2022). Do we need to reshape boards to fix this? That takes time and money, and a lot of both. Boards need a quicker fix. Bringing in an AI-savvy executive, such as a Chief AI Officer (CAIO), offers a practical solution. The CAIO acts as a translator, breaking down complex technical issues and algorithmic results so the board can actually use those insights to shape strategy. A critical caveat, however, is that leaning too heavily on one expert is not risk-free. Boards can’t just hand over the keys and hope for the best. They need to set clear reporting structures and strong governance rules. The CAIO should advise, not control. This way, the board keeps its independence and fiduciary responsibility and avoids turning the CAIO into a single point of failure.

The Human-AI Partnership in the Boardroom

Redefining Board Composition and Dynamics

To use AI effectively, boards of directors need to adapt. It is important to have members who understand AI and can make sense of the information that machine algorithms produce (Brynjolfsson & McAfee, 2014). This might mean bringing in people with expertise in data science, AI ethics, and cybersecurity. AI can also change how board meetings work: meetings can focus more on interpreting information rather than just gathering it.

Building Trust and Collaboration

Building trust between board members and AI systems is essential for effective collaboration (Afroogh, Akbari, Malone, et al., 2024). This trust comes from transparency and understandability. Everyone should know what AI can and cannot do. Boards need to set rules for how AI is used in decision making, ensuring that human judgment always has the final say. Regular, open conversations between board members and AI experts also help to surface concerns and clarify how AI is being used.

Developing Frameworks for AI Integration

Organizations should create frameworks for using AI effectively in important decisions. This means defining roles and responsibilities, setting ethical guidelines, and managing data carefully. These frameworks need to tackle issues such as algorithmic bias, data privacy, and cybersecurity (European Commission, 2019). For instance, setting up an AI committee can help ensure AI is used responsibly in board deliberations. This committee can develop standard procedures for checking that AI systems function correctly and ethically.

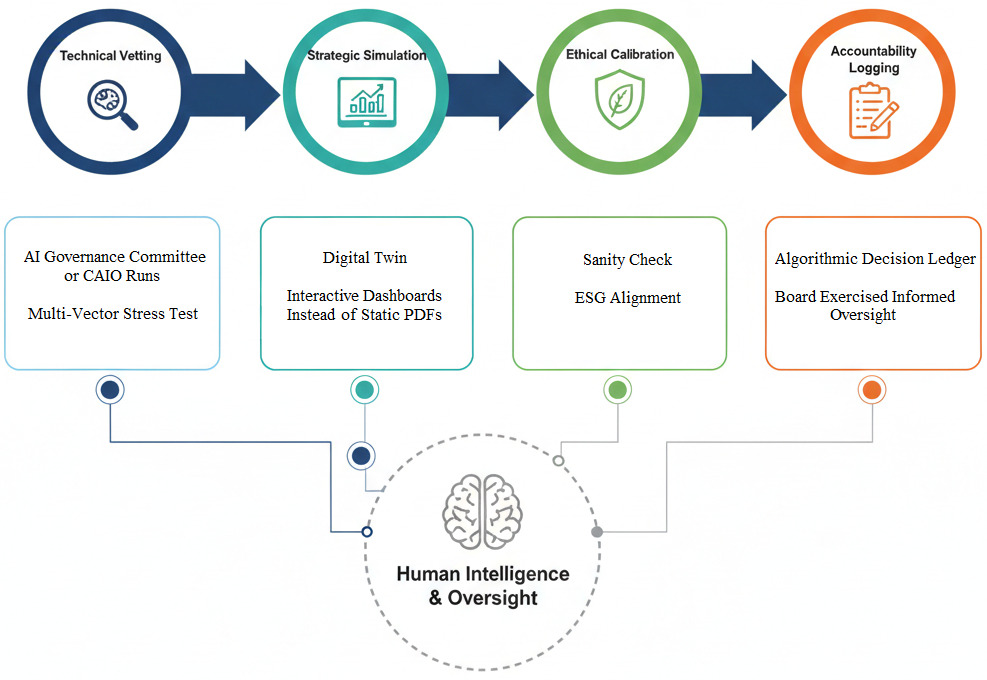

A 4-Pillar Framework for Algorithmic Governance

This framework is proposed to help corporate board members establish rules for oversight and accountability of AI systems, ensuring these systems augment, rather than undermine, human governance and fiduciary duty (Kearns & Maksimov, 2025). The first pillar is “policy and principle.” This pillar ensures that the firm’s ethical values are explicitly codified and enforced within its AI decision-making systems. The second pillar is “people and competency.” This pillar addresses human and organizational requirements, ensuring the board has the necessary skills and structures to govern AI effectively. The third pillar is “process and due diligence.” This pillar mandates the rigorous technical steps and validation required before an AI system can be deployed to inform strategic decisions. Unlike standard IT teams focused on operational rigor, this due diligence involves specialized teams that audit the AI model’s data lineage, check for algorithmic bias, and stress-test the output’s ethical and strategic reliability to manage fiduciary risk. The fourth pillar is “proof and accountability.” This last pillar defines clear lines of responsibility and legal preparedness, addressing the hard questions of liability when AI errs.

To move beyond the “what” of AI capabilities, the corporate board must adopt a four-stage operational process. This ensures that AI-driven insights are vetted, challenged, and ethically aligned before they influence company strategy.

1. Technical Vetting (Pre-Deliberation)

- Action: The AI Governance Committee or Chief AI Officer (CAIO) subjects the model to a “Multi-Vector Stress Test.”
- The “How”: Instead of a single check, the committee uses a tri-layered validation approach:
  - Explainability (XAI): The committee uses SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) (Anwar, 2025) to identify the top three drivers of the recommendation and to classify each as a legitimate logical driver, a proxy for bias, or an artifact of flawed data. This ensures the “why” rests on sound logic rather than on “noise” or biased historical data.
  - Sensitivity Analysis: The committee “flexes” the model by adjusting key market variables (e.g., interest rates, labor costs) to confirm that the AI’s output does not become volatile or irrational under pressure.
  - Adversarial Probing: The committee intentionally inputs “noisy” or edge-case data to see whether the model remains stable.
- Outcome: If the model fails any layer, by showing bias in its drivers or fragility in its logic, the insight is flagged and remediated before it ever reaches the boardroom.

2. Strategic Simulation (The Board Meeting)

- Action: The board engages with a “Digital Twin” of the company.
- The “How”: Instead of viewing a static PDF, board members use interactive dashboards to adjust variables (e.g., “What if energy costs rise by 15%?”). This moves the board from passive data consumption to active strategic inquiry.

3. Ethical and Qualitative Calibration (Human-in-the-Loop)

- Action: The board applies the “Sanity Check.”
- The “How”: Directors evaluate the AI’s “most efficient path” against the company’s Environmental, Social, and Governance (ESG) goals and brand reputation. This is where human judgment, which considers non-quantifiable stakeholder nuances, overrides or refines the algorithm.

4. Accountability Logging (The Audit Trail)

- Action: The board formalizes the Algorithmic Decision Ledger.
- The “How”: The board secretary records the AI’s recommendation, the specific human interventions or overrides, and the final rationale. This operational step helps establish a legal “safe harbor” by documenting that the board exercised informed oversight rather than blind reliance.
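The ledger in stage 4 can be sketched as an append-only log. Each entry captures the AI's recommendation, the human overrides, and the final rationale, and it also stores a SHA-256 hash of the previous entry. Any after-the-fact edit therefore breaks the chain and becomes detectable. The class and field names (`LedgerEntry`, `DecisionLedger`) and the sample decision are hypothetical assumptions, not a prescribed format. A hash chain is tamper-evident rather than tamper-proof, so in practice it would be paired with access controls or external copies of the latest hash.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

@dataclass
class LedgerEntry:
    decision_id: str
    ai_recommendation: str
    human_overrides: list
    final_rationale: str
    recorded_at: str
    prev_hash: str
    entry_hash: str = ""

    def compute_hash(self):
        # Hash every field except the stored hash itself, in a stable key order.
        payload = asdict(self)
        payload.pop("entry_hash")
        blob = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(blob).hexdigest()

class DecisionLedger:
    """Append-only log in which each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def record(self, decision_id, ai_recommendation, human_overrides, final_rationale):
        prev_hash = self.entries[-1].entry_hash if self.entries else self.GENESIS
        entry = LedgerEntry(decision_id, ai_recommendation, list(human_overrides),
                            final_rationale, datetime.now(timezone.utc).isoformat(),
                            prev_hash)
        entry.entry_hash = entry.compute_hash()
        self.entries.append(entry)
        return entry

    def verify(self):
        """True if no entry has been altered and the chain links are intact."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry.prev_hash != prev_hash or entry.compute_hash() != entry.entry_hash:
                return False
            prev_hash = entry.entry_hash
        return True

ledger = DecisionLedger()
ledger.record("capex-2025-01", "Close Plant B to cut costs",
              ["Delay closure 12 months pending community consultation"],
              "Board weighed ESG commitments and local employment impact.")
print(ledger.verify())  # True
ledger.entries[0].final_rationale = "Edited after the fact"
print(ledger.verify())  # False: the edit no longer matches the stored hash
```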

Figure 1 presents the operational process.
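The Sensitivity Analysis and Adversarial Probing layers of stage 1 can be sketched in a few lines. `sensitivity_sweep` "flexes" one input by a set of relative shocks and records the model's output. `noise_probe` applies small random perturbations to every input and reports the worst relative change. The noise probe is a simple random-perturbation stand-in: genuine adversarial testing searches deliberately for worst-case and edge-case inputs. The margin model, scenario values, and 10% stability threshold are hypothetical assumptions.

```python
import random

def projected_margin(inputs):
    # Hypothetical stand-in for the AI model under review.
    return inputs["revenue"] - inputs["labor_costs"] - inputs["interest_rate"] * inputs["debt"]

def sensitivity_sweep(model, inputs, variable, shocks):
    """Model output when one input is flexed by each relative shock (0.2 = +20%)."""
    results = {}
    for shock in shocks:
        flexed = dict(inputs)
        flexed[variable] = inputs[variable] * (1 + shock)
        results[shock] = model(flexed)
    return results

def noise_probe(model, inputs, noise_level=0.01, trials=200, seed=0):
    """Worst relative output change when every input gets small random noise."""
    rng = random.Random(seed)  # seeded so the probe is reproducible
    base = model(inputs)
    scale = max(abs(base), 1e-12)  # guard against dividing by zero
    worst = 0.0
    for _ in range(trials):
        noisy = {k: v * (1 + rng.uniform(-noise_level, noise_level))
                 for k, v in inputs.items()}
        worst = max(worst, abs(model(noisy) - base) / scale)
    return worst

scenario = {"revenue": 1000.0, "labor_costs": 600.0, "interest_rate": 0.05, "debt": 2000.0}
print(sensitivity_sweep(projected_margin, scenario, "interest_rate", [-0.2, 0.0, 0.2]))
worst = noise_probe(projected_margin, scenario)
print("stable" if worst < 0.10 else "flag for review", round(worst, 4))
```

A model whose output swings wildly under 1% input noise, or jumps irrationally under a modest interest-rate shock, would fail stage 1 and never reach the boardroom.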

The Future of Algorithmic Governance

Overcoming Resistance to Change

Bringing AI into boardrooms may meet with some pushback. This can happen because people fear losing their jobs or simply because they do not understand AI (Ford, 2015). Fear of the unknown also makes people cautious. To address these worries, corporate boards should focus on educating and training their members. It is important to show clearly how AI can help and to stress that working alongside AI is necessary to keep pace with a dynamic corporate environment.

The Evolving Role of the Board

AI is rapidly changing the work of boards of directors by shifting their focus from data analysis to strategic interpretation and ethical oversight. Boards must learn new skills to use AI well. They have a key role in ensuring AI is used responsibly (Manyika et al., 2017). For example, they must examine the moral aspects of AI algorithms and ensure decisions made by AI align with the organization’s values.

Human or AI: Who Is the Boss?

An important problem that corporate boards should focus on is that automated systems might erode human control and autonomy. If we depend too much on these systems, we might lose good decision-making skills and critical thinking. Danaher et al. (2017) note that when algorithms govern, they effectively set the rules. This can limit human decision making and leave people subject to machine decisions that are hard to understand or challenge. Corporate boards therefore need a sound balance between AI-generated insights and human judgment. I believe humans should be the boss, not the bots.

Algorithmic Governance: Challenges and Opportunities

Algorithmic governance involves both problems and opportunities. Algorithms can make processes more efficient and support decisions with data (Anwar, 2025). However, they also introduce important concerns. In Table 2, I provide a few challenges and opportunities.

Key Takeaways for Corporate Board Members

Using AI in corporate decision making can bring big opportunities and also some difficulties for board members. Below are some important points for boards to think about as they deal with these changes:

- Understanding AI Fundamentals: Board members do not need to be experts in artificial intelligence, but they should have a good grasp of its fundamental ideas. It is important for them to know what AI can achieve, where its limits are, and what kinds of risks it may present.
- Developing an AI Governance Framework: Board members should establish straightforward rules and policies to ensure AI is used ethically and responsibly.
- Risk Management and Compliance: Corporate boards must identify and reduce possible risks related to AI, such as bias, data privacy concerns, and security issues (Weismann, 2024). They must keep up to date with new AI regulations and ensure the company follows them carefully.
- Promoting AI Literacy: Companies should provide training and educational sessions to help board members and employees better understand AI. Fostering a habit of continuous learning helps everyone stay informed about the latest advances in AI technology.
- Ensuring Ethical AI Practices: When developing and using AI, always make ethical choices. Ensure that AI decision making is fair, transparent, and accountable.
- Oversight of AI Strategy: Board members should ensure that AI projects align with the company’s core business goals. This keeps everyone on the same track and maximizes the benefits of AI.
- Data Security and Privacy: Treat data security and privacy as top priorities, since AI systems depend heavily on data. This is distinct from general risk management because AI introduces specific regulatory and reputational liabilities related to the use of sensitive training data and potential leakage through algorithmic outputs.

Conclusion

In the present technologically advanced era, the Algorithmic Boardroom is an inevitability, not a choice, making the study of its governance an immediate imperative. This analysis concludes that leveraging AI’s unique capabilities is essential for corporate success. Those capabilities range from identifying hidden risks and conducting dynamic strategic forecasting against “black swan” events (Taleb, 2007) to strengthening human cognition with real-time, unstructured data (Brynjolfsson & McAfee, 2014; Secundo, Shams, & Nucci, 2021). However, successfully integrating AI is fundamentally a governance challenge rather than a technical one, because AI introduces unique liabilities related to algorithmic bias, legal uncertainty (Citron, 2008), and the “black box” problem (Anwar, 2025; Miller, 2019). Consequently, boards must shift their focus from mere data collection to strategic interpretation and ethical supervision.

About the Author

Ch. Mahmood Anwar (PhD) is a research consultant, editor, author, entrepreneur, and HR and project manager. His research interests include critical analysis of published business research, research methods, new construct development and validation, theory development, business statistics, business ethics, and technology for business. His work has been published in highly prestigious outlets such as California Management Review – Insights, Retraction Watch, Scholarly Kitchen, Reviewer Credits, EditorsCafe (Asian Council of Science Editors), and Scholarly Criticism. For more details, please visit: https://driveinmalaysia.com/about-us/